Contemporary Times and the 1920s

Undoubtedly, the most fascinating aspect of the 1920s for me has been how similar the decade is to contemporary times.

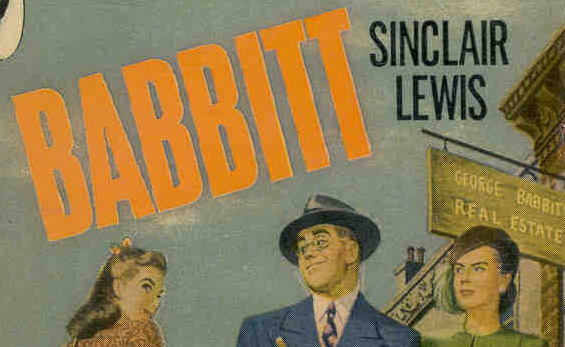

“What did this man want?” – Tagline from the cover of Babbitt

From everything I’ve been reading, it feels like the 1920s is where “American” society came from. All the extremes, the attitudes, the swings of the pendulum, the craziness… (Granted, when you look at other countries and their dictatorships and cults of personality, it isn’t really that crazy. But it’s crazy for a bunch of upstart Protestants from the wilds of England… Right?)

I really feel like most of our attitudes, and the way our society thinks, feels, and reacts, were all honed in this one significant decade. It all seems so familiar now. Not just right now, but in everything that’s happened since the 1920s.